As well as launching our staff tracker this week, we’re putting another idea to the test. Alongside the standard staff survey we’ll be offering a mini survey with around half the questions. The 12 providers who are involved in the staff pilot will be assigned one of two options – litre or pint-sized – to trial with a number of teaching staff. By piloting both at the same time we hope to find out:

- How much actionable data is lost by using only half the questions

- What is gained in terms of ease of use, engagement, and ease of analysis

- Actual time/cost savings from running a smaller survey with no customisation

- Whether there are sector or other differences that make different versions preferable in different settings

Depending on the results, we’ll consider trialling a mini survey for students too. And of course we’ll be asking pilots to have their say.

Why mini surveys?

Surveys occupy precious resources. We are constantly trying to balance the value of the feedback they provide with respect for staff and student time. We also know how much time our institutional leads devote to running the tracker and analysing the data. Ideally, mini surveys can achieve many of the same outcomes in less time. Even if they don’t work for everyone, they may be useful as a complement to the standard survey, perhaps to run in alternate years or as a low-risk starting point for new institutions.

Users of mini trackers will be able to benchmark their findings against other organisations in their sector, just as users of the standard tracker can. It won’t be possible to include standard and mini users in the same benchmarking group, even where they are in the same sector and answering the same questions. However, it will be possible to compare data across the two surveys outside of BOS, as we will do our own cross-analysis and publish the combined results. Users can therefore benchmark their findings against the wider group of respondents if they wish.

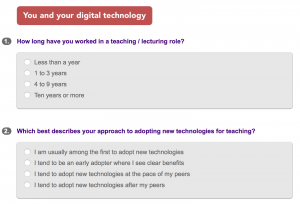

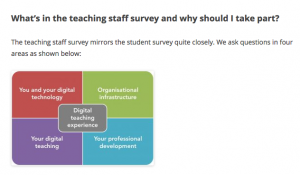

What’s in the mini surveys?

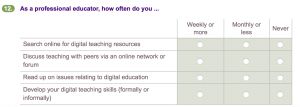

We’ve chosen equivalent questions from the staff and student trackers to include in the mini trackers. They are questions that map well across the two different user groups and in the case of the student tracker they are questions with proven value.

You can now try out the teaching staff trackers online: standard staff tracker | mini staff tracker

These are open access test versions: you can take them as often as you like. Asterisks show where questions are substantially the same as in the student tracker, which you can also try out here: standard student tracker (HE version). The mini student tracker will be available shortly.

We welcome feedback on the staff questions using this Google Doc pro forma, as we will be making improvements after the first pilot.

Further support for teaching staff

There is now a landing page for teaching staff to explain more about the tracker and how it’s being used. This is partly for teachers who encounter the staff survey in the 12 pilot institutions, and partly so that teaching staff at any institution can understand more about the project. As outlined in the previous post, teachers need to know that their viewpoint is valued. And teachers are often the gatekeepers to student engagement. You’ll notice that we’re moving away from calling this project the ‘tracker’ because of negative associations with monitoring. Instead we are using its proper title going forward: ‘Student digital experience service’.

We welcome feedback on this page. It’s not meant as a substitute for local messages and engagement activities, and it doesn’t appear in menus. Organisational leads can decide whether or not to promote it, and whether or not to use some of the material in local communications.

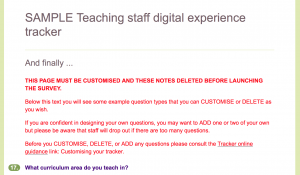

More on the staff tracker: custom questions

One of the most-liked features of the student tracker is the option to include custom questions. This means organisations can maximise the value of engagement and reduce survey load. Custom questions can be added to the standard versions of both the student and teaching staff trackers, and we include guidance to ensure the survey experience is seamless for users and the data collected is of a high quality.

Our parallel experience of developing the Digital discovery tool and talking to users about what they want from it has really helped us to appreciate the value of custom questions in the tracker. The discovery tool provides individuals with reflection and feedback. But we know that at an organisational level there’s a need for accurate data about issues such as:

- what proportion of staff are proficient users of organisational systems, e.g. Echo360, SharePoint, etc.

- what forms of training and development staff want

- what features of the VLE are used by staff (perhaps tracking differences across subject areas)

- how staff behaviour and/or attitudes change over time or over the course of a strategic initiative

- how digital practice differs across the organisation

BOS is the perfect tool to answer these questions as it is designed to provide accurate data from anonymous survey responses and summarise it at volume. Project leads will be able to add in exactly those questions they need to answer and can compare the answers across different broad groups of staff, or track them over time. Some of the questions asked in the standard staff tracker can also be used as key indicators.

Lightweight guidance on using the staff tracker will be produced in early March and circulated on the jiscmail list as well as on this blog.